R.M. Brannon and S. Leelavanichkul

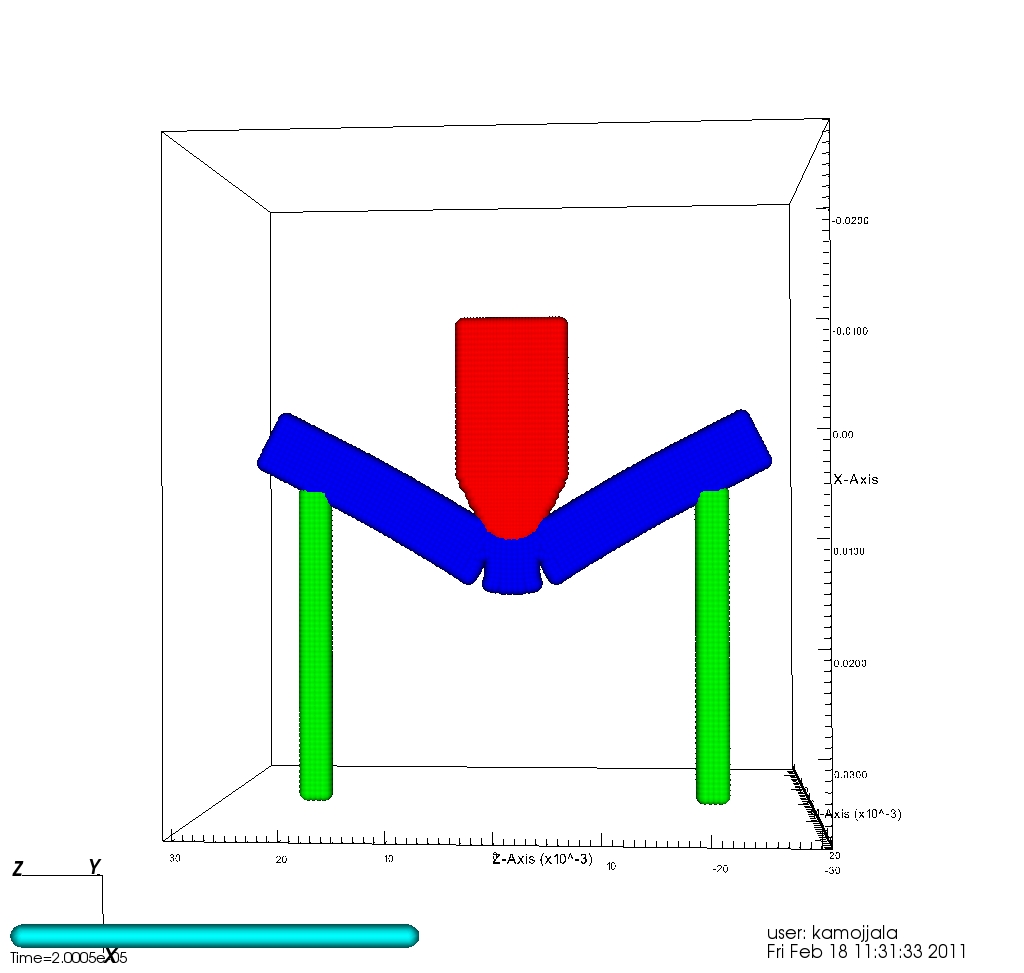

RHT model: damage contour plots showing side, front, and back views of the target (top to bottom).

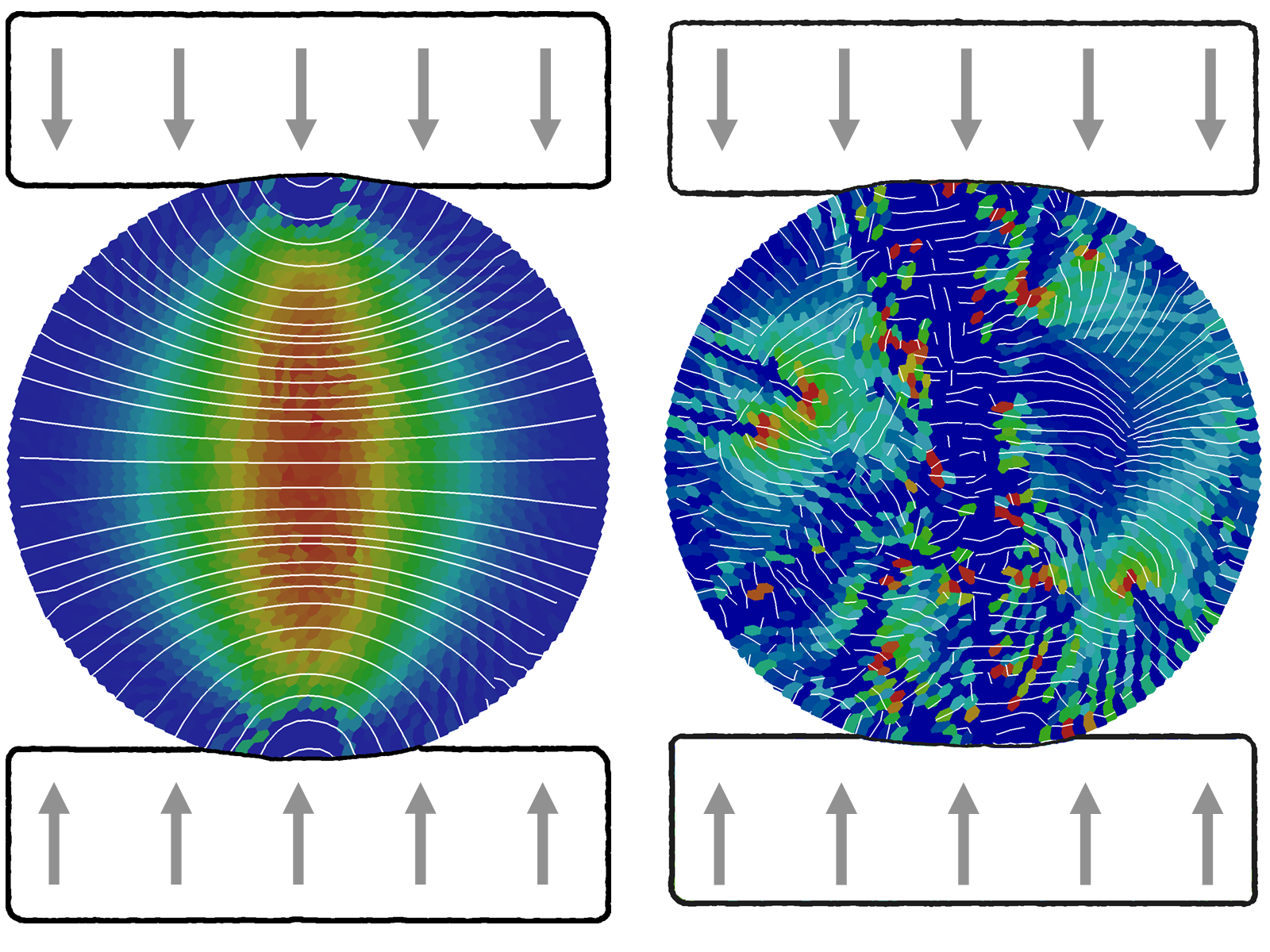

Four conventional damage plasticity models for concrete are compared: the Karagozian and Case model (K&C), the Riedel-Hiermaier-Thoma model (RHT), the Brannon-Fossum model (BF1), and the Continuous Surface Cap Model (CSCM). The K&C and RHT models have been used in commercial finite element programs for many years, whereas the BF1 and CSCM models are relatively new. All four models are essentially isotropic plasticity models in which "plasticity" means any form of inelasticity. All of the models support nonlinear elasticity, but with different formulations. All four models employ three shear strength surfaces. The yield surface bounds an evolving set of elastically obtainable stress states. The limit surface bounds stress states that can be reached by any means (elastic or plastic). To model softening, it is recognized that some stress states might be reached once but, because of irreversible damage, might not be achievable again. In other words, softening is the collapse of the limit surface, ultimately down to a final residual surface for fully failed material. The four models differ in their softening evolution equations and in the equations they use to degrade the elastic stiffness. For all four models, the strength surfaces are cast in stress space. All four models recognize that scale effects are important for softening, but they differ significantly in how they treat them. The K&C documentation, for example, mentions that a particular material parameter affecting the damage evolution rate must be set by the user according to the mesh size to preserve energy to failure. Similarly, the BF1 model presumes that all material parameters are set to values appropriate to the scale of the element; automated assignment of scale-appropriate values is available only through an enhanced implementation of BF1 (called BFS) that regards scale effects as coupled to statistical variability of material properties.
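To make the ideas of limit-surface collapse and mesh-dependent softening concrete, here is a minimal one-dimensional sketch. It assumes linear softening and a generic crack-band style regularization; it is not the actual equation set of K&C, RHT, BF1, or CSCM. The names `f_t` (tensile strength), `G_f` (fracture energy per unit crack area), `h` (element size), and the helper functions are hypothetical. The limit strength collapses linearly from its peak value toward a residual value as a scalar damage variable goes from 0 to 1, and the strain at full failure is chosen from the element size so that the energy dissipated per unit crack area stays at `G_f` regardless of mesh size.

```python
def softening_strain(f_t, G_f, h, E):
    """Strain at which a linearly softening element of size h carries zero stress.

    Crack-band assumption (illustrative): the element must dissipate G_f per unit
    crack area, i.e. G_f / h per unit volume. The area under a linear-up,
    linear-down stress-strain triangle is 0.5 * f_t * eps_f, so
    eps_f = 2 * G_f / (f_t * h).
    """
    eps_peak = f_t / E
    eps_f = 2.0 * G_f / (f_t * h)
    if eps_f <= eps_peak:
        # The softening branch would have to snap back: the element is too coarse.
        raise ValueError("element size too large for this fracture energy")
    return eps_f


def damage(eps, eps_peak, eps_f):
    """Scalar damage: 0 up to the peak, then rising linearly to 1 at eps_f."""
    if eps <= eps_peak:
        return 0.0
    if eps >= eps_f:
        return 1.0
    return (eps - eps_peak) / (eps_f - eps_peak)


def limit_strength(peak, residual, D):
    """Limit strength collapsing from the peak value (D = 0) to the residual value (D = 1)."""
    return (1.0 - D) * peak + D * residual


def uniaxial_stress(eps, E, f_t, eps_f):
    """Stress on a monotonic tensile path; residual tensile strength is taken as zero."""
    eps_peak = f_t / E
    if eps <= eps_peak:
        return E * eps
    return limit_strength(f_t, 0.0, damage(eps, eps_peak, eps_f))


def energy_per_crack_area(E, f_t, G_f, h, n=20000):
    """Trapezoid-rule area under the stress-strain curve, multiplied by element size h."""
    eps_f = softening_strain(f_t, G_f, h, E)
    d_eps = eps_f / n
    area = 0.0
    for i in range(n):
        s0 = uniaxial_stress(i * d_eps, E, f_t, eps_f)
        s1 = uniaxial_stress((i + 1) * d_eps, E, f_t, eps_f)
        area += 0.5 * (s0 + s1) * d_eps
    return area * h


if __name__ == "__main__":
    E, f_t, G_f = 30e9, 3e6, 100.0  # Pa, Pa, J/m^2 (illustrative concrete-like values)
    for h in (0.01, 0.05, 0.2):  # element sizes in metres
        print(h, softening_strain(f_t, G_f, h, E), energy_per_crack_area(E, f_t, G_f, h))
```

Smaller elements get a larger failure strain, and the printed energy per unit crack area should come out close to `G_f` for every element size. This is the sense in which a mesh-dependent softening parameter "preserves energy to failure." Without that adjustment, the dissipated energy would shrink as the mesh is refined.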
The RHT model appears to offer similar optional support for uncertainty and for automated setting of scale-dependent material parameters. The K&C, RHT, and CSCM models support rate dependence by allowing the strength to be a function of strain rate, whereas the BF1 model uses Duvaut-Lions viscoplasticity theory to give a smoother prediction of transient effects. During softening, all four models require a certain amount of strain to develop before allowing significant damage accumulation. For the K&C, RHT, and CSCM models, the strain-to-failure is tied to fracture energy release, whereas a similar effect is achieved indirectly in the BF1 model by a time-based criterion that is tied to crack propagation speed.
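The Duvaut-Lions idea can be illustrated with a one-dimensional sketch. In the generic Duvaut-Lions form, the stress relaxes toward the rate-independent (inviscid) solution with a relaxation time `tau`: d(sigma)/dt = E d(eps)/dt - (sigma - sigma_bar)/tau. The sketch below assumes an elastic-perfectly-plastic inviscid model with yield stress `Y` under monotonic loading only, and integrates the equation with backward Euler. All names and parameter values are hypothetical and are not taken from BF1 itself.

```python
def inviscid_stress(eps, E, Y):
    """Rate-independent reference stress for MONOTONIC loading of an
    elastic-perfectly-plastic bar (assumption: no unloading)."""
    return min(E * eps, Y)


def duvaut_lions_path(strain_rate, eps_max, tau, E, Y,
                      n_load=2000, hold_time=0.0, n_hold=2000):
    """Backward-Euler integration of
        d(sigma)/dt = E * d(eps)/dt - (sigma - sigma_bar) / tau.

    Ramps the strain to eps_max at a constant strain_rate, then optionally holds
    the strain fixed for hold_time. Returns a list of (time, strain, stress).
    """
    t, eps, sigma = 0.0, 0.0, 0.0
    path = [(t, eps, sigma)]
    segments = [(eps_max / strain_rate / n_load, strain_rate, n_load)]
    if hold_time > 0.0:
        segments.append((hold_time / n_hold, 0.0, n_hold))
    for dt, rate, n_steps in segments:
        a = dt / tau
        for _ in range(n_steps):
            t += dt
            eps += rate * dt
            sigma_bar = inviscid_stress(eps, E, Y)
            # Implicit update: unconditionally stable for any dt / tau.
            sigma = (sigma + E * rate * dt + a * sigma_bar) / (1.0 + a)
            path.append((t, eps, sigma))
    return path


if __name__ == "__main__":
    E, Y, tau = 30e9, 30e6, 1e-5  # Pa, Pa, s (illustrative values)
    slow = duvaut_lions_path(1e-3, 5e-3, tau, E, Y)
    fast = duvaut_lions_path(100.0, 5e-3, tau, E, Y, hold_time=1e-4)
    print("slow peak / Y:", max(s for _, _, s in slow) / Y)
    print("fast peak / Y:", max(s for _, _, s in fast) / Y)
    print("fast after hold / Y:", fast[-1][2] / Y)
```

At a slow strain rate the stress stays essentially at `Y`. At a fast rate it overshoots `Y` (an apparent rate strengthening), and when the strain is then held fixed, the overstress decays smoothly back toward the inviscid solution instead of being set directly by a rate-dependent strength function.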

Available Online:

http://www.mech.utah.edu/~brannon/pubs/7-2009BrannonLeelavanichkulSurveyConcrete.pdf